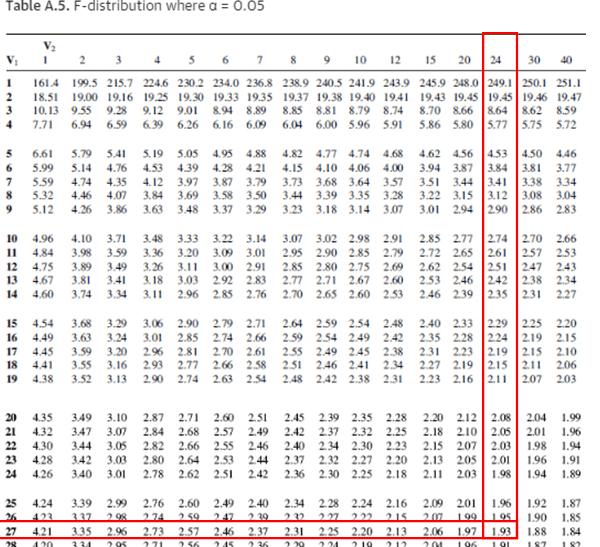

Understanding Degrees of Freedom

Degrees of freedom (DoF) is a slightly abstract statistical concept that refers to the number of values in a statistical calculation that are free to vary. Simply put, it gives an idea of how much information you have at your disposal to estimate statistical parameters. In general, DoF equal the sample size minus the number of parameters being estimated, and they play a crucial role in hypothesis testing and in choosing the appropriate statistical distribution for inference.

Let's consider a simple example to illustrate this concept: you have a dataset containing five data points, and you know their average (mean). If you know the values of four of these data points, you can easily calculate the value of the fifth because it's constrained by the average. In this case, you have four DoF because four values can freely vary.

This concept becomes increasingly important as we delve into more complex statistical tests and models. For instance, in a chi-square test, DoF define the shape of the chi-square distribution, which in turn determines the critical value for the test. Similarly, in regression analysis, DoF help quantify the amount of information "used" by the model, thus playing a pivotal role in determining the statistical significance of predictor variables and the overall model fit.

Questions, suggestions, and requests are welcome on the repo, where the formulae and their sources can be found.
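The five-point example can be checked numerically. The sketch below is only an illustration: the data values and the helper name `missing_value` are hypothetical, not taken from the text. It recovers the constrained fifth value from the other four plus the mean, and shows the sample variance dividing by n - 1, the same four DoF.

```python
from statistics import mean, variance

def missing_value(known, target_mean, n):
    """Recover the single constrained value from n - 1 known values and the mean."""
    return target_mean * n - sum(known)

# Hypothetical dataset of five points (assumed values, for illustration only).
data = [4, 8, 6, 5, 7]
m = mean(data)

# Four values vary freely; the fifth is fixed once the mean is known.
fifth = missing_value(data[:4], m, len(data))
dof = len(data) - 1

# The sample variance divides the squared deviations by n - 1 (the DoF).
manual_var = sum((x - m) ** 2 for x in data) / dof

print(fifth, dof, manual_var, variance(data))
```

Changing any one of the first four values changes the recovered fifth value, but the mean alone never leaves more than four values free.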

If the outcome is negative, such that a reduction is desired, select yes to compute the relative risk reduction (RRR) and the absolute risk reduction (ARR).

These recommendations use the short names for the methods (shown in parentheses in the dropdown menu). Given a large sample size, the Wald method suffices (Jewell, 2004). The small method can be used as a diagnostic: if its point estimates and CI differ markedly from the Wald results, the sample size is not large enough for Wald (Jewell, 2004). When this occurs for the odds ratio, you can use the Fisher method (Jewell, 2004). A better alternative might be mid-p, the default option, which is recommended by Agresti (2013, p. 85), although it may be highly conservative (Agresti, 2013).

Estimation and Inference for Measures of Association.

These are pseudo-R-squareds, as they attempt to recreate the properties of R-squared from OLS, and they achieve those properties to varying degrees. The marginal R-squared attempts to capture the variance explained by the fixed effects in the model, and the conditional R-squared attempts to capture the variance explained by both the fixed effects and the random effects. Here R^2_m and R^2_c are the marginal and conditional R-squareds respectively, var_re is the average of the random effects variance, sigma_squared is the variance within clusters, and var_fixed is the variance explained by the fixed effects in the model.

Check the results for convergence. If they do not converge, try another optimization method from the dropdown menu above.
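The formulas for the marginal and conditional R-squared are not shown in the text. As a minimal sketch, assuming the common variance-decomposition form (each measure is a share of var_fixed + var_re + sigma_squared), they could be computed as below. The function name `pseudo_r_squared` and the input values are hypothetical.

```python
def pseudo_r_squared(var_fixed, var_re, sigma_squared):
    """Return (marginal, conditional) pseudo-R-squared from variance components.

    Assumed form (not confirmed by the source):
      R^2_m = var_fixed / (var_fixed + var_re + sigma_squared)
      R^2_c = (var_fixed + var_re) / (var_fixed + var_re + sigma_squared)
    """
    total = var_fixed + var_re + sigma_squared
    if total <= 0:
        raise ValueError("total variance must be positive")
    r2_marginal = var_fixed / total
    r2_conditional = (var_fixed + var_re) / total
    return r2_marginal, r2_conditional

# Hypothetical variance components, for illustration only.
r2_m, r2_c = pseudo_r_squared(var_fixed=2.0, var_re=1.0, sigma_squared=1.0)
print(r2_m, r2_c)
```

Under this assumed form the conditional value can never be smaller than the marginal one, since it adds the random effects variance to the numerator.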
